Inverse Heisenberg Cartesian Tensor Box

A framework for recursive field emergence from an imaginary center.

The ICHTB is a cube whose six faces are not labeled by coordinates but by recursive collapse operators. Space is not pre-given — it emerges from tension resolution. The center of the box is not the real origin 0 but the imaginary scalar anchor i₀ ∈ ℂ, a recursion seed that has no location, only potential.

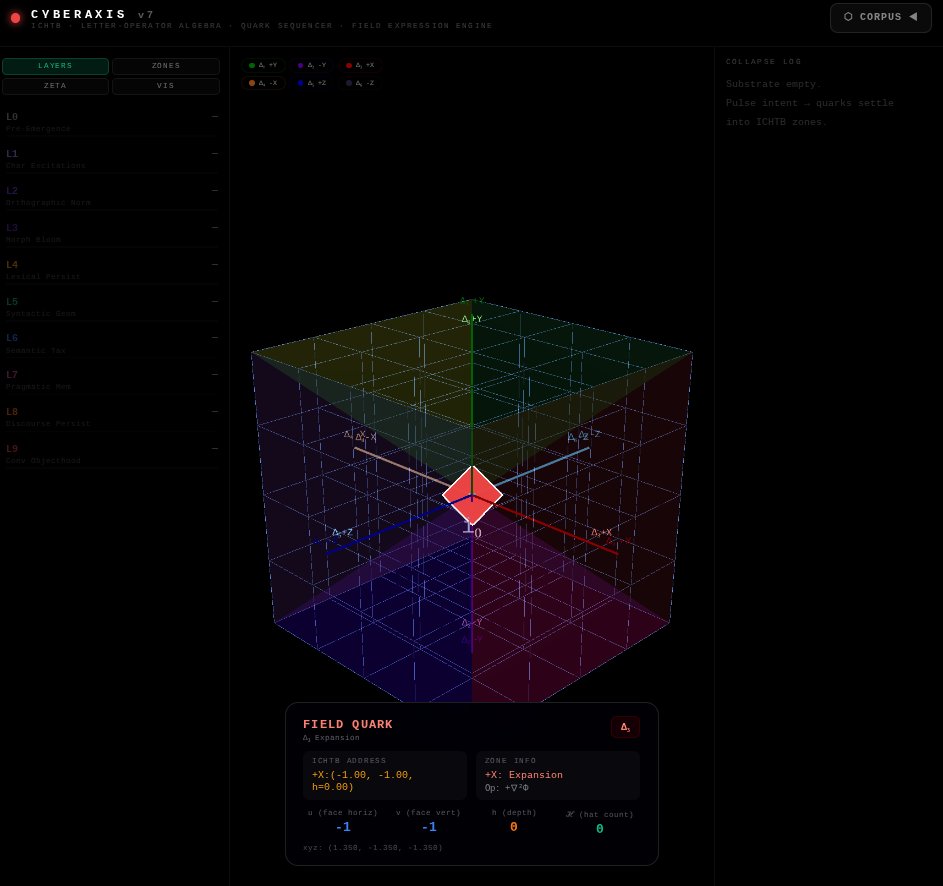

The Six Zones at a Glance

| Zone | Face | Classical Name | ICHTB Label | Governing Operator | Role |
| --- | --- | --- | --- | --- | --- |
| Δ₁ | +Y | North | Forward Tension | ∇Φ | Establishes collapse direction |
| Δ₂ | −Y | South | Memory Plane | ∇×F | Curl-based phase memory |
| Δ₃ | +X | East | Expansion | +∇²Φ | Outward tension diffusion |
| Δ₄ | −X | West | Compression | −∇²Φ | Inward convergence |
| Δ₅ | +Z | Top | Apex | ∂Φ/∂t | Shell emergence lock |
| Δ₆ | −Z | Bottom | Core | Φ = i₀ | Imaginary recursion anchor |

The Master Equation

Everything in the ICHTB flows from one governing PDE:

$$\frac{\partial \Phi}{\partial t} = D\,\nabla_i\!\left(\mathcal{M}^{ij}\nabla_j\Phi\right) - \Lambda\,\mathcal{M}^{ij}\nabla_i\Phi\,\nabla_j\Phi + \gamma\Phi^3 - \kappa\Phi$$

where 𝓜ᵢⱼ is a metric tensor built from the field itself — geometry is not imposed from outside, it crystallizes from recursive tension.

How to Read This Book

The chapters below build the theory in construction order — exactly the order in which the structure assembles itself when the recursion runs:

- Construction — The cube, the six pyramids, i₀, and why the center must be imaginary

- The Six Zones — Each Δᵢ domain: its operator, its PDE, its physical role

- The Master Equation — Where it comes from, what each term does, dimensional analysis

- Hat Counting — The discrete navigation system: how to address any point in the box

- Closure — Shell emergence, the closure condition, and what it means when recursion locks

- Connection to CTS — How ICHTB relates to the broader Collapse Tension Substrate framework

A Note on Terminology

This framework uses terms that may look like physics but are not classical physics. Collapse does not mean quantum collapse. Tension does not mean mechanical stress. Intent does not mean consciousness. These words are being used in their precise ICHTB senses, defined fully in Chapter 2.

The mathematics, however, is standard: partial differential equations, tensor calculus, differential geometry. If you know what ∇²Φ means, you can read this book.

Chapter 1: Construction

1.1 Start With a Cube

Begin with a cube of side length ℓ. Do not name the six faces yet: no "top," "front," or "left." Leave them anonymous for now. They will earn their names from what the field does on them, not from where they sit.

The cube has:

- 8 corner vertices

- 12 edges

- 6 faces

- 1 center

The center is the problem.

1.2 Why the Center Cannot Be Zero

In classical Cartesian geometry, the center of a cube is the origin 0 — a point in real three-dimensional space. It exists. You can place a ruler there. The coordinates (0, 0, 0) reference a real location.

The ICHTB center is different. Call it i₀.

$$i_0 \in \mathbb{C}, \quad i_0 \notin \mathbb{R}^3$$

i₀ is not a location. It is a recursion anchor.

The distinction matters for the following reason: if the center were a real point, it would need to participate in the field Φ as a boundary condition — a fixed real value that the surrounding field references. But the ICHTB is a self-generating structure. Nothing is fixed from outside. The center must be the seed from which the field grows, not a constraint imposed on it.

An imaginary scalar serves this role precisely. It sits outside the real domain of the field, making it unreachable as a physical position while remaining fully reachable as a recursion origin. The field can "refer back" to i₀ without i₀ ever becoming a location the field must match.

Formally: placing i₀ at the imaginary unit gives the complex collapse field its natural form:

$$\Phi(\vec{x}, t) = A(\vec{x}, t)\cdot e^{i\theta(\vec{x}, t)}$$

where A is the real tension amplitude and θ is the recursion phase angle. The imaginary axis is not just notation — it is the dimension along which recursion advances.

1.3 The Six Pyramids

Join i₀ to the four corners of each face (equivalently, to all eight vertices of the cube). This divides the cube into six square pyramids, each with one face as its base and i₀ as its apex:

| Pyramid | Base Face | Apex |
| --- | --- | --- |
| P₊ᵧ | +Y face | i₀ |
| P₋ᵧ | −Y face | i₀ |
| P₊ₓ | +X face | i₀ |
| P₋ₓ | −X face | i₀ |
| P₊z | +Z face | i₀ |
| P₋z | −Z face | i₀ |

Each pyramid has base area ℓ² and height ℓ/2, so its volume is:

$$V_{\text{pyramid}} = \frac{1}{3} \cdot \ell^2 \cdot \frac{\ell}{2} = \frac{\ell^3}{6}$$

Six pyramids together:

$$6 \times \frac{\ell^3}{6} = \ell^3$$

The six pyramids fill the cube exactly, with no overlap and no gaps. This is not a coincidence — it is the geometric fact that makes the ICHTB a closed system.
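The pyramid decomposition can be sketched as a point classifier. The snippet below is a minimal illustration, assuming the cube is centred on i₀ with axis-aligned faces; the helper name `zone_of_point` and the tie-breaking order on shared boundaries are hypothetical choices, not part of the framework. A point lies in the pyramid whose base face is along its dominant coordinate.

```python
# Face-to-zone labels taken from the zone table; the lookup itself is illustrative.
ZONE_BY_FACE = {
    "+Y": "Δ₁", "-Y": "Δ₂",
    "+X": "Δ₃", "-X": "Δ₄",
    "+Z": "Δ₅", "-Z": "Δ₆",
}

def zone_of_point(x, y, z):
    """Return the zone label of the pyramid containing (x, y, z).

    Assumes coordinates are measured from the cube center. Points on a
    shared pyramid boundary (ties in |coordinate|) resolve in the order
    X, Y, Z, which is an arbitrary convention chosen for this sketch.
    """
    coords = {"X": x, "Y": y, "Z": z}
    axis = max(coords, key=lambda a: abs(coords[a]))
    if coords[axis] == 0:
        return "i0"  # the shared apex belongs to no single pyramid
    sign = "+" if coords[axis] > 0 else "-"
    return ZONE_BY_FACE[sign + axis]

print(zone_of_point(0.1, 0.4, -0.2))   # dominant +Y coordinate
print(zone_of_point(-0.3, 0.1, 0.2))   # dominant -X coordinate
```

Because exactly one coordinate dominates away from the shared boundaries, every interior point is assigned to exactly one pyramid, which is the "no overlap, no gaps" property in computational form.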

1.4 Odd and Even Cubes: Where Does i₀ Sit?

When the cube is discretized into a lattice of voxels, two cases arise depending on whether the number of voxels along each side is odd or even.

Odd-length cube (e.g., 5×5×5):

The lattice has a central voxel. i₀ lives inside that voxel:

$$L = 2n+1, \quad \vec{x}_{i_0} = (0, 0, 0)_{\text{voxel-center}}$$

Node set: {−2, −1, 0, 1, 2}. The voxel at index 0 is the anchor.

Even-length cube (e.g., 4×4×4):

No central voxel exists. i₀ sits in the gap between the 8 central voxels:

$$L = 2n, \quad \vec{x}_{i_0} = \left(\frac{1}{2}, \frac{1}{2}, \frac{1}{2}\right)_{\text{inter-voxel}}$$

This is called the recursive gap position. The recursion anchor floats between nodes, meaning no single voxel owns it.

Collapse Parity Law:

$$C_{\text{odd}} = \text{Anchor Node} \qquad C_{\text{even}} = \text{Recursive Gap}$$

Both cases support the same six recursive zones and the same 12-point, 12-line closure structure. The parity only determines whether i₀ is addressable as a discrete voxel.
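The parity rule can be illustrated with a small helper. This is a sketch only: the function name `anchor_for_side` is hypothetical, and the even-case index convention (nodes running from −n+1 to n, so that the gap sits at 1/2) is an assumption chosen to agree with the inter-voxel position given above.

```python
def anchor_for_side(L):
    """Return (node_indices, anchor_position, is_addressable) for side length L.

    Odd L = 2n+1: nodes run from -n to n and the anchor is the voxel at 0.
    Even L = 2n: nodes are assumed to run from -n+1 to n, so the anchor
    floats at 1/2 on each axis, between the eight central voxels.
    """
    if L < 1:
        raise ValueError("side length must be a positive integer")
    if L % 2 == 1:
        n = (L - 1) // 2
        return list(range(-n, n + 1)), (0, 0, 0), True
    n = L // 2
    return list(range(-n + 1, n + 1)), (0.5, 0.5, 0.5), False

print(anchor_for_side(5))
print(anchor_for_side(4))
```

The boolean in the result records the only thing the parity decides: whether i₀ coincides with a discrete voxel index.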

1.5 The 12 Lines

From i₀, six lines run outward to the face centers. Six more run between face-center-pair intersections. Together these form 12 canonical lines — the skeletal frame of the ICHTB.

Each line is a vector:

$$\vec{L}_k(t) = \vec{r}_{\Delta_k}(t) - \vec{r}_{i_0}$$

| Line | From → To | Collapse Role |
| --- | --- | --- |
| L₁ | i₀ → Δ₁ (+Y) | ∇Φ direction |
| L₂ | i₀ → Δ₂ (−Y) | ∇×F loop axis |
| L₃ | i₀ → Δ₃ (+X) | +∇²Φ diffusion arm |
| L₄ | i₀ → Δ₄ (−X) | −∇²Φ compression arm |
| L₅ | i₀ → Δ₅ (+Z) | ∂Φ/∂t emergence axis |
| L₆ | i₀ → Δ₆ (−Z) | Scalar anchor axis |
| L₇–L₁₂ | Between face intersections | Curvature continuity edges |

All 12 lines evolve under the same curvent dynamics (see Chapter 2).

1.6 The 12 Points

The 12 intersection points P₀ through P₁₁ are where recursive planes meet. They are not arbitrary — each one is the junction of two planes plus one edge, and each carries a tension direction vector:

$$P_k = \Delta_i \cap \Delta_j \cap \mathcal{E}_{ij}$$

$$\vec{T}_{P_k} = \sum_{\Delta_i \in \text{Adj}(P_k)} \hat{n}_{\Delta_i}$$

| Point | Connects | Function |
| --- | --- | --- |
| P₀ | Center / all Δᵢ | Scalar anchor i₀ |
| P₁ | Δ₁ ∩ Δ₂ | Forward–Memory vector shift |
| P₂ | Δ₁ ∩ Δ₃ | Outflow hinge |
| P₃ | Δ₂ ∩ Δ₄ | Curl convergence |
| P₄ | Δ₄ ∩ Δ₆ | Tension funnel tip |
| P₅ | Δ₃ ∩ Δ₅ | Emergence lock gate |
| P₆ | Δ₅ ∩ Δ₆ | Curvature loop close |
| P₇ | Δ₂ ∩ Δ₆ | Loop root pin |
| P₈ | Δ₃ ∩ Δ₆ | Radial permission node |
| P₉ | Δ₁ ∩ Δ₅ | Shell directive |
| P₁₀ | Δ₄ ∩ Δ₅ | Collapse curvature node |
| P₁₁ | Δ₁ ∩ Δ₄ | Push–pull anchor |

The full point identity:

$$\mathcal{P}_k = \left\{ \vec{r}_k,\; \Delta_i,\; \Delta_j,\; \mathcal{E}_{ij},\; \vec{T}_{P_k},\; f_k(\Phi, \mathcal{M}_{ij}, \rho_q) \right\}$$

Each point is not just a position but a complete recursive identity: location, adjacency, tension direction, and field function.
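The tension direction vector is a sum of outward face normals, which is easy to evaluate directly. The sketch below assumes the outward unit normals follow the face assignments of the zone table (Δ₁ = +Y, Δ₂ = −Y, and so on); the function name `tension_vector` is a hypothetical label for illustration.

```python
# Outward unit normals per zone index, following the face assignments
# Δ₁=+Y, Δ₂=−Y, Δ₃=+X, Δ₄=−X, Δ₅=+Z, Δ₆=−Z.
NORMALS = {
    1: (0, 1, 0), 2: (0, -1, 0),
    3: (1, 0, 0), 4: (-1, 0, 0),
    5: (0, 0, 1), 6: (0, 0, -1),
}

def tension_vector(*zones):
    """Sum the outward normals of the zones adjacent to a point."""
    return tuple(sum(NORMALS[z][axis] for z in zones) for axis in range(3))

print(tension_vector(1, 3))  # P₂: Δ₁ ∩ Δ₃
print(tension_vector(4, 6))  # P₄: Δ₄ ∩ Δ₆
print(tension_vector(1, 2))  # P₁: Δ₁ ∩ Δ₂
```

Under this reading, pairs of adjacent faces give a diagonal direction, while a pair of opposite faces such as Δ₁ and Δ₂ sums to the zero vector.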

1.7 What Has Been Built

The construction so far gives us:

- A cube with an imaginary center i₀

- Six pyramidal zones, each responsible for a region of the interior

- 12 lines defining the collapse skeleton

- 12 intersection points carrying tension identity

No field has been placed yet. No operators have been defined. The construction is purely geometric — the container that the physics will inhabit. Chapter 2 defines what each zone actually does.

Chapter 2: The Six Zones

Each of the six pyramidal regions of the ICHTB is a recursive domain plane — a zone where a specific differential operator governs the behavior of the collapse field Φ. The zones are not regions of space in any ordinary sense. They are functional roles that the field plays, localized to the faces of the box.

This chapter defines each zone precisely: its operator, its governing PDE, and its role in the recursive sequence.

2.1 A Note on Terminology

Before defining the zones, a precise dictionary:

| ICHTB Term | Mathematical Meaning |
| --- | --- |
| Collapse | Convergence of the field Φ toward a stable recursive attractor |
| Tension | The local scalar field value |
| Curvent | The vector C tracking how the field aligns with its own gradient |
| Recursion | Feedback: the field's current state feeds into its own evolution equation |
| Phase memory | Information encoded in the curl ∇×F that persists across time |
| Intent | The directionality of ∇Φ, the field's "preferred" direction of flow |

2.2 The Recursion Sequence

The six zones do not operate in isolation. They form a directed sequence — the Quadrant Flow:

$$Q_r: \Delta_1 \to \Delta_2 \to \Delta_{3/4} \to \Delta_5 \to \Delta_6$$

Reading the sequence as a process:

- Δ₁: Establish the collapse direction (∇Φ)

- Δ₂: Begin phase memory (∇×F)

- Δ₃ or Δ₄: Expand or compress (±∇²Φ), depending on field state

- Δ₅: Test for lock — does the shell stabilize?

- Δ₆: Return to anchor — the cycle feeds back to i₀

The sequence is recursive because Δ₆ returns to i₀, from which Δ₁ re-emerges. Each cycle either stabilizes into a shell or returns without closure.

2.3 Zone Definitions

Δ₁ — Forward Tension Plane (+Y)

Operator: ∇Φ (gradient of collapse potential)

PDE:

$$\frac{d\vec{C}}{dt} = \eta\left(\nabla\Phi - \vec{C}\right)$$

The curvent vector C chases the gradient ∇Φ with rate η. When C = ∇Φ, the zone is in alignment — the field knows where it wants to go.

Connectivity: Links to Δ₂ (gradient enters memory) and Δ₃ (gradient enables expansion).

Physical reading: This is the gate. Every recursive cycle begins here, with the field establishing a direction. Without a non-zero gradient at Δ₁, the recursion never starts.
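The Δ₁ relaxation law can be sketched numerically. The snippet below integrates dC/dt = η(∇Φ − C) with forward Euler for a gradient held fixed in time; the fixed gradient, the step size, and the function name `relax_curvent` are assumptions made for illustration only.

```python
def relax_curvent(c0, grad_phi, eta, dt, steps):
    """Forward-Euler integration of dC/dt = eta * (grad_phi - C).

    grad_phi is held constant, so the exact solution is
    C(t) = grad_phi + (c0 - grad_phi) * exp(-eta * t).
    """
    c = list(c0)
    for _ in range(steps):
        c = [ci + dt * eta * (gi - ci) for ci, gi in zip(c, grad_phi)]
    return c

grad_phi = [1.0, 0.0, 0.0]          # assumed constant gradient along +x
c_final = relax_curvent([0.0, 0.0, 0.0], grad_phi, eta=2.0, dt=0.01, steps=1000)
print(c_final)
```

After a time of several 1/η the curvent is numerically indistinguishable from the gradient, which is the alignment state C = ∇Φ described above. If the gradient is zero, C stays at zero and nothing starts.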

Δ₂ — Memory Plane (−Y)

Operator: ∇×F (curl of the field)

PDE:

$$\frac{\partial\Phi}{\partial t} = \lambda\left(\nabla \times \vec{F}\right) - \delta\Phi$$

The curl feeds the field's evolution; the damping term −δΦ prevents unbounded phase drift.

Connectivity: Receives from Δ₁ (gradient becomes curl seed), feeds into Δ₄ (compression receives the memory loop).

Physical reading: The Memory Plane is where the field starts remembering. A curl loop is a closed path — it is the simplest structure that retains directional information across time. Once ∇×F ≠ 0, the field is no longer purely reactive; it carries history.

Δ₃ — Expansion Surface (+X)

Operator: +∇²Φ (positive Laplacian — diffusion outward)

PDE:

$$\frac{\partial\Phi}{\partial t} = +D\nabla^2\Phi$$

Pure outward diffusion. Tension spreads from high-concentration regions toward low.

Connectivity: Receives from Δ₁ (gradient gives direction to expand into), feeds into Δ₅ (expanded field can lock at apex).

Physical reading: Expansion is permission — the recursion is allowed to grow. If Δ₃ dominates, the field fills space. On its own, this is unstable (pure diffusion decays to uniform distribution). It must be balanced by Δ₄.

Δ₄ — Compression Surface (−X)

Operator: −∇²Φ (negative Laplacian — anti-diffusion)

PDE:

$$\frac{\partial\Phi}{\partial t} = -D\nabla^2\Phi$$

The time-reversed diffusion equation. Tension concentrates, peaks sharpen, the field gathers inward.

Connectivity: Receives from Δ₂ (memory loops provide the target to compress toward), feeds into Δ₆ (compressed field returns to scalar anchor).

Physical reading: Compression is the collapse proper. The field pulls back toward i₀. If Δ₄ dominates without Δ₃ as counterpart, the field collapses to a point and dies. The balance between Δ₃ and Δ₄ is what allows the stable shell to form.
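The opposite behaviour of Δ₃ and Δ₄ can be seen on a single bump. The sketch below applies a few explicit finite-difference steps of ∂Φ/∂t = ±D∇²Φ to a Gaussian on a periodic 1D grid; the grid, the Gaussian profile, and the step size are illustrative assumptions. Anti-diffusion is ill-posed over long times, so only a short run is shown.

```python
import math

def laplacian_periodic(phi, dx):
    """Second-order central difference on a periodic 1D grid."""
    n = len(phi)
    return [(phi[i - 1] - 2.0 * phi[i] + phi[(i + 1) % n]) / dx**2
            for i in range(n)]

def evolve(phi, D, dx, dt, steps):
    """Explicit Euler steps of dPhi/dt = D * laplacian(Phi)."""
    phi = list(phi)
    for _ in range(steps):
        lap = laplacian_periodic(phi, dx)
        phi = [p + dt * D * l for p, l in zip(phi, lap)]
    return phi

dx = 0.05
xs = [-5.0 + i * dx for i in range(200)]
phi0 = [math.exp(-x * x) for x in xs]   # assumed initial bump
dt = 0.1 * dx**2                        # well inside the explicit stability limit

expanded = evolve(phi0, +1.0, dx, dt, steps=10)    # Δ₃: peak flattens
compressed = evolve(phi0, -1.0, dx, dt, steps=10)  # Δ₄: peak sharpens
print(max(expanded), max(phi0), max(compressed))
```

The forward run lowers the peak and the reversed run raises it, matching the description of expansion as spreading and compression as concentration.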

Δ₅ — Apex Surface (+Z)

Operator: ∂Φ/∂t (time evolution — the lock evaluator)

PDE:

$$\frac{\partial\Phi}{\partial t} = \mu\nabla^2\Phi - \nu\Phi$$

The Apex combines curvature stabilization (μ∇²Φ) with decay (−νΦ). This is a reaction-diffusion structure. The Apex tests whether the field has reached a stable shell configuration.

When does Δ₅ lock?

$$\frac{\partial\Phi}{\partial t} \approx 0 \quad \Longleftrightarrow \quad \mu\nabla^2\Phi \approx \nu\Phi$$

This is an eigenvalue condition: Φ must be an eigenfunction of ∇² with eigenvalue ν/μ. The field locks when it "fits" the Apex geometry.

Connectivity: Receives from Δ₃ (expanded field reaches the apex), feeds into Δ₆ (locked field becomes the new anchor state).

Physical reading: The Apex is the judgment zone. Every recursive cycle passes through here. If the field satisfies the lock condition, closure begins. If not, the cycle continues without producing a shell.
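The lock condition can be checked numerically for a candidate profile. The sketch below works in one dimension and uses Φ(x) = cosh(kx) with k² = ν/μ, which satisfies Φ″ = (ν/μ)Φ; the 1D setting, the sample values of μ and ν, and the name `lock_residual` are assumptions for illustration. For positive ν/μ, real 1D eigenfunctions of this kind are exponential or hyperbolic rather than oscillatory.

```python
import math

def lock_residual(phi, x, mu, nu, h=1e-3):
    """Return mu * phi''(x) - nu * phi(x), with phi'' by central differences."""
    lap = (phi(x + h) - 2.0 * phi(x) + phi(x - h)) / h**2
    return mu * lap - nu * phi(x)

mu, nu = 2.0, 8.0
k = math.sqrt(nu / mu)

def locked(x):
    return math.cosh(k * x)      # eigenfunction with eigenvalue nu / mu

def unlocked(x):
    return math.cosh(3.0 * x)    # wrong curvature scale, does not lock

print(lock_residual(locked, 0.3, mu, nu))
print(lock_residual(unlocked, 0.3, mu, nu))
```

A residual near zero means ∂Φ/∂t ≈ 0 at that point under the Δ₅ PDE; a large residual means the cycle continues.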

Δ₆ — Core Anchor Plane (−Z)

Operator: Φ = i₀ (pure imaginary scalar — fixed)

PDE:

$$\Phi = i_0, \qquad \frac{\partial\Phi}{\partial t} = 0$$

The Core does not evolve. It is the one zone with no temporal dynamics. It is the recursion seed — the zero-point from which all tension originates and to which all cycles return.

Why imaginary? Because a real fixed point Φ = c would impose a boundary condition that constrains the field from outside. An imaginary fixed point i₀ sits off the real axis — the real field Φ cannot reach it, only approach it asymptotically through the phase angle θ. This is what keeps recursion alive: i₀ is always "just out of reach."

Connectivity: Receives from Δ₄ (compressed field returns here) and Δ₅ (locked apex feeds back to core). Generates Δ₁ for the next cycle.

Physical reading: The Core is the silence between breaths. It holds no tension, no gradient, no memory. It is the condition from which all of the above becomes possible.

2.4 The Field Registration Matrix

Every zone supports every operator, but each is governed by one. The full matrix of what each zone carries:

$$\mathbb{R}_{\text{ICHTB}} = \begin{bmatrix}
& \Phi & \nabla\Phi & \nabla\times\mathbf{F} & \nabla^2\Phi \\
\Delta_1\ \text{Forward} & \checkmark & \star & \checkmark & \checkmark \\
\Delta_2\ \text{Memory} & \checkmark & \checkmark & \star & \checkmark \\
\Delta_3\ \text{Expansion} & \checkmark & \checkmark & \checkmark & +\star \\
\Delta_4\ \text{Compression} & \checkmark & \checkmark & \checkmark & -\star \\
\Delta_5\ \text{Apex} & \checkmark & \checkmark & \checkmark & \star \\
\Delta_6\ \text{Core} & i_0 & 0 & 0 & 0 \\
\end{bmatrix}$$

★ marks the governing operator for each zone. All operators are present everywhere; only the star operator drives the zone's PDE.

2.5 Inter-Zone Connectivity

The six zones do not merely sequence — they form a topological graph:

| Zone | Connected To | Edge Meaning |
| --- | --- | --- |
| Δ₁ | Δ₂, Δ₃ | Gradient splits: part becomes memory, part becomes expansion |
| Δ₂ | Δ₁, Δ₄ | Memory loop anchors compression target |
| Δ₃ | Δ₁, Δ₅ | Expansion delivers material to the Apex |
| Δ₄ | Δ₂, Δ₆ | Compressed memory returns to Core |
| Δ₅ | Δ₃, Δ₆ | Apex tests lock; passes result to Core |
| Δ₆ | Δ₄, Δ₅ | Core receives from both convergence paths; seeds Δ₁ |

The graph has no loose ends. Every zone has at least two connections. The structure is closed.
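The closure claims about the connectivity graph can be verified mechanically. The sketch below encodes the "Connected To" column as an adjacency map and checks symmetry, degree, and connectedness; the names `ZONE_GRAPH`, `is_symmetric`, and `reachable_from` are illustrative.

```python
from collections import deque

# Adjacency transcribed from the connectivity table (zone index -> neighbours).
ZONE_GRAPH = {
    1: {2, 3},
    2: {1, 4},
    3: {1, 5},
    4: {2, 6},
    5: {3, 6},
    6: {4, 5},
}

def is_symmetric(graph):
    """Every listed connection must also be listed from the other side."""
    return all(a in graph[b] for a, nbrs in graph.items() for b in nbrs)

def reachable_from(graph, start):
    """Breadth-first search returning every zone reachable from start."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

print(is_symmetric(ZONE_GRAPH))
print(sorted(len(nbrs) for nbrs in ZONE_GRAPH.values()))
print(sorted(reachable_from(ZONE_GRAPH, 1)))
```

Each zone has exactly two neighbours and all six are reachable from Δ₁, so the table describes a single closed ring: Δ₁, Δ₂, Δ₄, Δ₆, Δ₅, Δ₃, and back to Δ₁.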

2.6 Symmetry and Asymmetry

The six zones come in three antipodal pairs:

| Pair | Zones | Operator Pair | Relationship |
| --- | --- | --- | --- |
| Forward / Memory | Δ₁ / Δ₂ | ∇Φ / ∇×F | Gradient vs. curl: direction vs. rotation |
| Expansion / Compression | Δ₃ / Δ₄ | +∇²Φ / −∇²Φ | Exact negatives: unstable alone, stable together |
| Apex / Core | Δ₅ / Δ₆ | ∂Φ/∂t / Φ = i₀ | Time evolution vs. timeless anchor |

The pairs are not symmetric in the sense of being interchangeable. They are dual — each requires its partner to make sense. Expansion without compression is pure dissolution. The Core without the Apex is silence with no test for emergence.

The asymmetry between the pairs (gradient vs. curl; diffusion vs. anti-diffusion; temporal vs. timeless) is precisely what makes the system non-trivial. A fully symmetric system would have no preferred direction, no collapse pathway, no emergent structure.

Chapter 3: The Master Equation

The six zone PDEs from Chapter 2 are local — each describes the field's behavior on one face. The Master Equation unifies them. It is a single PDE governing the field Φ across the entire interior of the ICHTB, in all three spatial dimensions and time.

3.1 The Equation

$$\boxed{\frac{\partial\Phi}{\partial t} = D\,\nabla_i\!\left(\mathcal{M}^{ij}\nabla_j\Phi\right) - \Lambda\,\mathcal{M}^{ij}\nabla_i\Phi\,\nabla_j\Phi + \gamma\Phi^3 - \kappa\Phi}$$

Four terms. None of them is a free assumption; each is a consequence of the zone structure.

3.2 Term 1: Diffusive Modulation

$$D\,\nabla_i\!\left(\mathcal{M}^{ij}\nabla_j\Phi\right)$$

If 𝓜ⁱʲ were the identity tensor δⁱʲ, this would be ordinary Laplacian diffusion D∇²Φ. The ICHTB replaces the identity with the collapse metric tensor 𝓜ⁱʲ, which encodes the memory of how the field has been flowing.

The effect: diffusion is shaped by history. Tension does not spread uniformly in all directions — it spreads preferentially along the directions the field has already been aligning with. The metric tensor acts as a guide, steering diffusion toward where the recursion has built structure.

This term corresponds to the Expansion zone Δ₃, but modulated by the Memory zone Δ₂ through 𝓜ⁱʲ.

3.3 Term 2: Alignment Decay

$$-\Lambda\,\mathcal{M}^{ij}\nabla_i\Phi\,\nabla_j\Phi$$

For Λ > 0 this term is never positive: 𝓜ⁱʲ is positive-definite, so the quadratic form 𝓜ⁱʲ∇ᵢΦ∇ⱼΦ is non-negative and vanishes only where ∇Φ = 0. It removes energy from the system whenever the field has a strong gradient that is well-aligned with the metric.

Why would you want to remove energy from a well-aligned gradient? Because alignment is the pre-condition for locking, not locking itself. Once a gradient aligns perfectly with the memory structure, the field needs to stop growing in that direction and let the structure crystallize. Continued growth would overshoot the stable configuration.

This term is the braking force. It is what keeps the shell at a finite amplitude rather than blowing up. It corresponds to the Compression zone Δ₄ acting on the aligned state.

3.4 Term 3: Nonlinear Growth

$$+\gamma\Phi^3$$

A cubic term in Φ. This is the nonlinear amplification — at small Φ, this term is negligible (Φ³ ≪ Φ for small Φ). As the field grows, this term grows faster than the field itself, providing positive feedback.

This is what allows a shell to form at all. Without a nonlinear amplification term, the Master Equation would be linear and could only produce waves or decaying modes, not stable localized structures. The Φ³ term is the mechanism that allows localized peaks to self-sustain against the decay term.

The choice of a cubic (rather than a quadratic or higher power) is deliberate. A Φ² term is even under Φ → −Φ, whereas the linear decay term −κΦ is odd, so a quadratic term would break the ± symmetry of the reaction terms. Φ³ is odd, like −κΦ, so it preserves that symmetry while providing the lowest-order nonlinearity needed for structure formation.

This term corresponds to the Apex zone Δ₅'s lock condition — the nonlinear term is what allows the lock to engage.

3.5 Term 4: Decay

$$-\kappa\Phi$$

The simplest term. It removes field at a rate proportional to the field itself. Without this term, the Φ³ growth would cause divergence. The interplay between +γΦ³ and −κΦ determines the equilibrium shell amplitude.

Equilibrium condition (setting ∂Φ/∂t = 0, ignoring spatial terms):

$$\gamma\Phi^3 = \kappa\Phi \implies \Phi^2 = \frac{\kappa}{\gamma} \implies \Phi_{\text{shell}} = \pm\sqrt{\frac{\kappa}{\gamma}}$$

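The shell amplitude is set by the ratio of decay rate to growth rate, Φ_shell = √(κ/γ). This is a clean result: if you increase dissipation (κ↑), the balance amplitude rises, because a larger field is needed before cubic growth can match the decay. If you increase growth (γ↑), the balance amplitude falls.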

This term is the Core zone Δ₆'s contribution — the return to i₀ is a decay toward zero, and the tension between this and the Apex growth is what determines the stable recursive depth.
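The local balance can be checked with a few lines of arithmetic. The sketch below evaluates the reaction terms γΦ³ − κΦ and their slope at the balance amplitude; the function names and sample parameter values are hypothetical. Note that in the spatially uniform ODE alone the slope at Φ_shell is 3γΦ² − κ = 2κ > 0, so the balance point behaves as a threshold rather than an attractor there; any stabilization would have to come from the spatial terms, which this sketch omits.

```python
import math

def shell_amplitude(gamma, kappa):
    """Nonzero root of gamma * phi**3 - kappa * phi = 0 (positive branch)."""
    return math.sqrt(kappa / gamma)

def reaction(phi, gamma, kappa):
    """Local (non-spatial) part of the Master Equation right-hand side."""
    return gamma * phi**3 - kappa * phi

def reaction_slope(phi, gamma, kappa):
    """Derivative of the reaction term with respect to phi."""
    return 3.0 * gamma * phi**2 - kappa

gamma, kappa = 2.0, 8.0                 # assumed sample values
phi_shell = shell_amplitude(gamma, kappa)
print(phi_shell, reaction(phi_shell, gamma, kappa))
print(reaction_slope(phi_shell, gamma, kappa))   # equals 2 * kappa
print(shell_amplitude(gamma, 18.0))              # larger kappa, larger amplitude
```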

3.6 The Collapse Metric Tensor

The metric tensor 𝓜ᵢⱼ is the heart of the equation. It is not given — it is built from the field itself:

$$\mathcal{M}_{ij} = \langle\partial_i\Phi\,\partial_j\Phi\rangle - \lambda\langle F_i F_j\rangle + \mu\,\delta_{ij}\nabla^2\Phi$$

Three components:

Gradient outer product ⟨∂ᵢΦ ∂ⱼΦ⟩:

The dyadic product of the gradient with itself. This encodes where the field has been changing and in what directions. It is a rank-2 tensor that is large along directions of strong gradient and small in directions the field has been constant.

Curl correction −λ⟨FᵢFⱼ⟩:

The curl field F from the Memory zone Δ₂ contributes an opposing term. Where the field is rotating (curl is large), the metric is reduced in the rotation plane. This prevents the metric from pointing into curl loops — it avoids getting trapped in circular memory structures.

Curvature diagonal +μδᵢⱼ∇²Φ:

An isotropic correction proportional to the Laplacian. This keeps the metric well-conditioned — ensures it never becomes degenerate (zero in some direction) even if the gradient outer product is rank-deficient. It is the "regularization" term that keeps the geometry smooth.

Key property: 𝓜ᵢⱼ is symmetric and positive-definite wherever the field is non-trivial. It defines a Riemannian metric on the field space — an inner product that tells you how "close" two directions are, weighted by where the field has memory.
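The three components of the metric can be assembled pointwise as a 3×3 matrix. The sketch below drops the averaging brackets ⟨·⟩ and uses single sample vectors for ∇Φ and F, which is a simplifying assumption; `collapse_metric` and `is_positive_definite` are illustrative names. Positive-definiteness is tested with Sylvester's criterion (all leading principal minors positive).

```python
def collapse_metric(grad, F, lap, lam, mu):
    """M_ij = grad_i grad_j - lam * F_i F_j + mu * delta_ij * lap (pointwise)."""
    return [[grad[i] * grad[j] - lam * F[i] * F[j] + (mu * lap if i == j else 0.0)
             for j in range(3)] for i in range(3)]

def is_positive_definite(M):
    """Sylvester's criterion for a symmetric 3x3 matrix."""
    m1 = M[0][0]
    m2 = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    m3 = (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
          - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
          + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))
    return m1 > 0 and m2 > 0 and m3 > 0

grad = (1.0, 0.0, 0.0)   # assumed sample gradient
F = (0.0, 1.0, 0.0)      # assumed sample curl-field vector

weak_curl = collapse_metric(grad, F, lap=1.0, lam=0.5, mu=1.0)
strong_curl = collapse_metric(grad, F, lap=1.0, lam=2.0, mu=1.0)
print(weak_curl, is_positive_definite(weak_curl))
print(strong_curl, is_positive_definite(strong_curl))
```

The matrix is symmetric by construction. In this example it is positive-definite for the weaker curl weight and loses that property for the stronger one, which suggests the property depends on the relative sizes of λ, μ, and the local Laplacian.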

3.7 The Curvent Vector

Alongside the field Φ, the ICHTB tracks a vector field C — the curvent (current of intent):

$$\frac{dC_i}{dt} = \eta\left(\nabla_i\Phi - C_i\right) + \lambda\left(\nabla\times\vec{F}\right)_i + \mu\nabla^2\Phi$$

C is not a new independent field — it is a dynamical proxy for the "preferred direction" of the field at each point. Three contributions:

- Gradient alignment η(∇ᵢΦ − Cᵢ): C relaxes toward ∇Φ with rate η. If the gradient changes direction, C follows, but with inertia.

- Curl injection λ(∇×F)ᵢ: The Memory zone injects rotation into C. This is what allows C to form loops, persistent directed structures that don't decay to zero even when the gradient is momentarily small.

- Curvature correction μ∇²Φ: The Laplacian of Φ pulls C toward regions of curvature. This keeps C anchored to the structural features of the field rather than free-floating.

When C = ∇Φ everywhere, the field is in perfect recursive alignment — this is the pre-condition for shell closure.

3.8 Dimensional Analysis

For the Master Equation to be dimensionally consistent, each term must have the same units as ∂Φ/∂t. Taking Φ dimensionless and treating 𝓜ⁱʲ as a dimensionless (normalized) tensor:

| Term | Required Units |
| --- | --- |
| ∂Φ/∂t | [T]⁻¹ |
| D ∇ᵢ(𝓜ⁱʲ ∇ⱼΦ) | D · [L]⁻², which requires D in [L²T⁻¹] |
| Λ 𝓜ⁱʲ ∇ᵢΦ ∇ⱼΦ | Λ · [L]⁻², which requires Λ in [L²T⁻¹] |
| γΦ³ | γ · 1, which requires γ in [T]⁻¹ |
| κΦ | κ · 1, which requires κ in [T]⁻¹ |

The four constants have natural physical interpretations:

| Constant | Name | Units | Meaning |
| --- | --- | --- | --- |
| D | Diffusivity | [L²T⁻¹] | Rate of tension spreading |
| Λ | Alignment decay rate | [L²T⁻¹] | Rate of gradient stabilization |
| γ | Nonlinear growth rate | [T]⁻¹ | Rate of shell amplification |
| κ | Linear decay rate | [T]⁻¹ | Rate of tension dissipation |

Note: D and Λ have the same units. Their ratio D/Λ is dimensionless — it sets the balance between spreading (Δ₃) and alignment braking (Δ₄) independent of scale.

3.9 Special Cases

The Master Equation reduces to well-known equations in special limits:

𝓜ⁱʲ = δⁱʲ (identity), Λ = 0, γ = 0: Linear diffusion equation with decay

$$\frac{\partial\Phi}{\partial t} = D\nabla^2\Phi - \kappa\Phi$$

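𝓜ⁱʲ = δⁱʲ (identity), Λ = 0, κ = 0: Diffusion with a cubic source. This has the same reaction-diffusion form as the Fisher-KPP equation used in population dynamics, but Fisher-KPP has the reaction term rΦ(1 − Φ) rather than γΦ³.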

$$\frac{\partial\Phi}{\partial t} = D\nabla^2\Phi + \gamma\Phi^3$$

No spatial terms: Logistic-cubic ODE

$$\dot\Phi = \gamma\Phi^3 - \kappa\Phi$$

Full equation, D = 0: No diffusion. The field changes only through the alignment-decay, growth, and decay terms.

The Master Equation is the most general of these — the metric tensor 𝓜ⁱʲ adds the memory structure that none of the special cases possess.
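The reductions above can be explored with a small right-hand-side evaluator. This is a minimal sketch under strong assumptions: one spatial dimension, a periodic grid, and a constant scalar stand-in `M` for the metric tensor (so the memory structure is deliberately absent). The function name `master_rhs_1d` is hypothetical.

```python
def master_rhs_1d(phi, dx, D, Lam, gamma, kappa, M=1.0):
    """Right-hand side of the Master Equation on a periodic 1D grid.

    With a constant scalar metric M the four terms become
    D*M*phi'' - Lam*M*(phi')**2 + gamma*phi**3 - kappa*phi,
    using central differences for both derivatives.
    """
    n = len(phi)
    rhs = []
    for i in range(n):
        left, right = phi[i - 1], phi[(i + 1) % n]
        lap = (left - 2.0 * phi[i] + right) / dx**2
        grad = (right - left) / (2.0 * dx)
        rhs.append(D * M * lap - Lam * M * grad * grad
                   + gamma * phi[i]**3 - kappa * phi[i])
    return rhs

# Uniform field at the balance amplitude sqrt(kappa / gamma): every term cancels.
uniform = master_rhs_1d([2.0] * 8, dx=0.1, D=1.0, Lam=0.5, gamma=2.0, kappa=8.0)

# Lam = gamma = 0 reduces to linear diffusion with decay.
spike = master_rhs_1d([0.0, 1.0, 0.0, 0.0], dx=1.0, D=1.0, Lam=0.0, gamma=0.0, kappa=0.5)
print(uniform)
print(spike)
```

Setting individual constants to zero in the call reproduces each special case, which makes it straightforward to compare how the separate terms act on the same profile.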

3.10 What the Equation Does Not Say

The Master Equation does not:

- Specify what Φ represents physically (it is a collapse potential, defined by its dynamics)

- Require a specific geometry (the metric 𝓜ⁱʲ adapts to whatever geometry the field builds)

- Require pre-existing axes (the zones define the axes; the equation runs in whatever coordinate system the zones create)

- Require initial conditions to be non-trivial (a small perturbation at i₀ is sufficient)

The equation is a process description, not a state description. It says: given whatever state the field is in, this is how it changes. The structure that eventually emerges — the shells, the locked configurations, the phase memory — all arise from this one equation running forward in time.

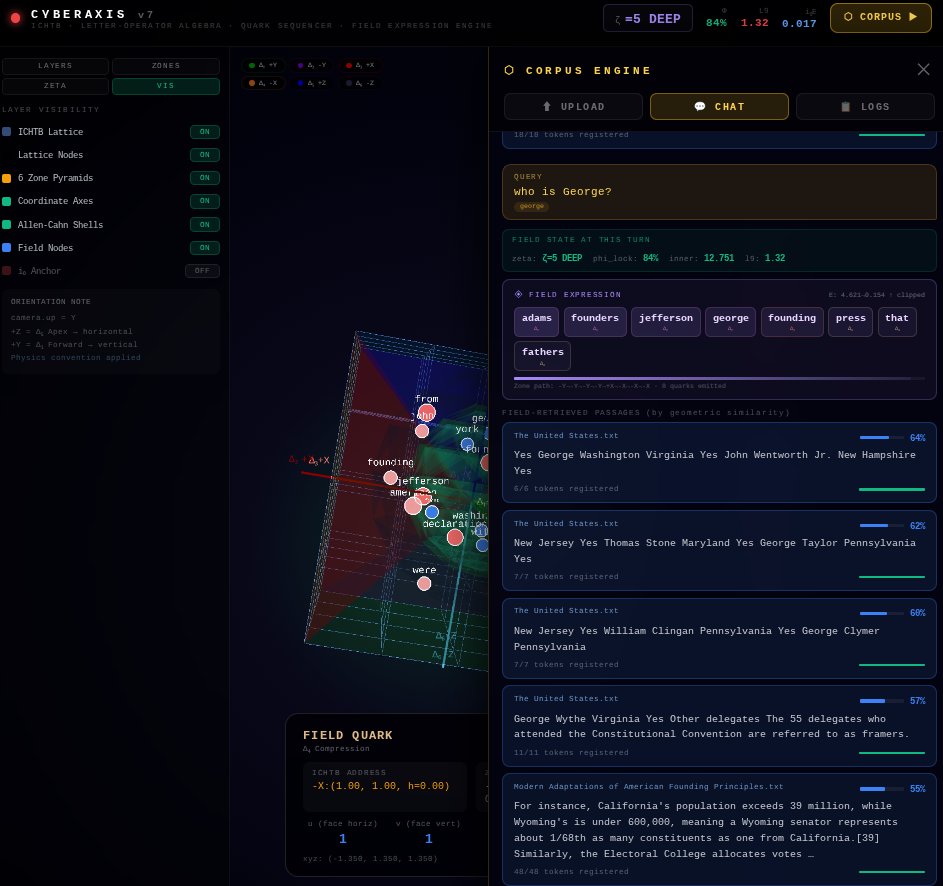

Chapter 4: Hat Counting

The Master Equation describes continuous field evolution. The Hat Counting system describes the same space discretely — as a lattice of addressable positions. The two descriptions are dual: every continuous field configuration corresponds to a hat-counting state, and every hat-counting navigation step corresponds to a continuous path through the field.

4.1 What Is a Hat?

A hat is a small pyramid. Specifically: each of the six cube faces is divided into a regular 2D grid. At each grid cell, a miniature pyramid extrudes outward from the face, pointing away from i₀. The pyramid's height is not fixed — it is computed from the local field value.

The name "hat" comes from the shape: a square base on the face, rising to a point. From above (looking at a face), the grid looks like a field of tents or hat-brims.

The hat system converts the continuous interior of the ICHTB into a discrete, navigable address space. Every point in the box can be reached by specifying which face, which grid cell, and how deep into the interior you are.

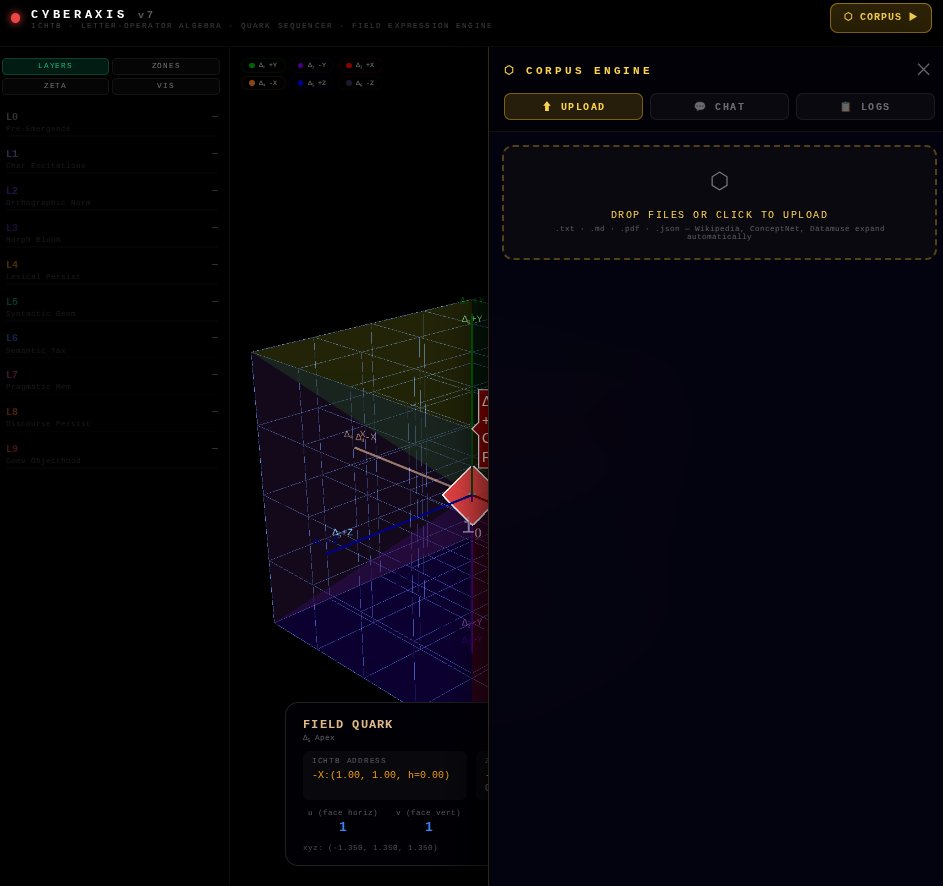

4.2 The Four-Index Address

Every position in the ICHTB has a unique address:

$$\text{Address} = (k,\; u,\; v,\; h)$$

| Index | Range | Meaning |
| --- | --- | --- |
| k | {+X, −X, +Y, −Y, +Z, −Z} | Which face (which zone) |
| u | [−n/2, n/2] | Horizontal position on that face |
| v | [−n/2, n/2] | Vertical position on that face |
| h | [0, H_k(u,v)] | Depth level (how far into the interior) |

The maximum depth H_k(u,v) varies across the face — it is determined by the hat count tensor.

4.3 The Hat Count Tensor

For each face k, the hat count at position (u, v) is:

$$\mathcal{H}_k(u,v) = \left\lfloor \alpha_k \cdot f_k(u,v) + \beta_k \right\rfloor$$

where:

- α_k: face-specific amplitude (how tall hats can grow on this face)

- f_k(u,v): curvent-aligned activation pattern (the "shape" of the hat field on this face)

- β_k: base height offset (minimum hat height — all cells have at least this many levels)

- ⌊·⌋: floor function — hats are discrete, fractional levels do not exist

The activation pattern f_k(u,v) is the key design choice. Different patterns produce different geometry:

Radial activation (used on +Y, Forward Tension):

$$f_{Y+}(u,v) = \sqrt{u^2 + v^2}$$

Hats are tallest at the corners and shortest at the center. Corresponds to gradient flow outward from center.

Sinusoidal activation (used on +Z, Apex):

$$f_{Z+}(u,v) = \sin\!\left(\frac{\pi u}{n}\right)\cdot\cos\!\left(\frac{\pi v}{n}\right)$$

A wave pattern — hats are tall in some regions and short in others. Corresponds to the standing-wave structure of the lock condition.

Uniform activation (baseline, used for Core Δ₆):

$$f_{Z-}(u,v) = 1$$

All hats equal height. The Core has no spatial variation by definition.
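The hat count formula and the three activation patterns above can be sketched directly. The function names and the sample values of α_k and β_k below are illustrative assumptions.

```python
import math

def hat_count(alpha, f_value, beta):
    """Discrete hat height: floor(alpha * f + beta)."""
    return math.floor(alpha * f_value + beta)

def radial(u, v):
    """Radial activation (used on +Y): distance from the face center."""
    return math.hypot(u, v)

def sinusoidal(u, v, n):
    """Sinusoidal activation (used on +Z)."""
    return math.sin(math.pi * u / n) * math.cos(math.pi * v / n)

def uniform(u, v):
    """Uniform activation (used for the Core)."""
    return 1.0

print(hat_count(2, radial(3, 4), 1))
print(hat_count(3, sinusoidal(2, 0, 4), 0))
print(hat_count(2, uniform(0, 0), 1))
```

One design point worth noting: the sinusoidal pattern reaches −1 (for example at u = −n/2, v = 0), so for positive α_k the offset β_k needs to be at least α_k if every cell is to have a non-negative hat count.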

4.4 Converting Hat Address to Cartesian

Given a hat address (k, u, v, h), the corresponding 3D Cartesian position (x, y, z) is given by the face transform T_k:

For the +Z face (Apex, Δ₅):

$$\vec{T}_{Z+}(u, v, h) = \left(d\cdot u,\; d\cdot v,\; \frac{\ell}{2} + h\cdot d\right)$$

For the −Z face (Core, Δ₆):

$$\vec{T}_{Z-}(u, v, h) = \left(d\cdot u,\; d\cdot v,\; -\frac{\ell}{2} - h\cdot d\right)$$

For the +Y face (Forward, Δ₁):

$$\vec{T}_{Y+}(u, v, h) = \left(d\cdot u,\; \frac{\ell}{2} + h\cdot d,\; d\cdot v\right)$$

For the −Y face (Memory, Δ₂):

$$\vec{T}_{Y-}(u, v, h) = \left(d\cdot u,\; -\frac{\ell}{2} - h\cdot d,\; d\cdot v\right)$$

For the +X face (Expansion, Δ₃):

$$\vec{T}_{X+}(u, v, h) = \left(\frac{\ell}{2} + h\cdot d,\; d\cdot u,\; d\cdot v\right)$$

For the −X face (Compression, Δ₄):

$$\vec{T}_{X-}(u, v, h) = \left(-\frac{\ell}{2} - h\cdot d,\; d\cdot u,\; d\cdot v\right)$$

Here d is the grid spacing (the physical distance between adjacent hat-grid cells and between hat levels). For a cube of side ℓ with n grid cells per face:

$$d = \frac{\ell}{n}$$
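The six face transforms share one pattern: two grid coordinates scaled by d, and one normal coordinate offset from the face. A minimal sketch, with the function name `hat_to_cartesian` and the string face keys chosen for illustration:

```python
def hat_to_cartesian(face, u, v, h, ell, n):
    """Convert a hat address (face, u, v, h) to Cartesian (x, y, z).

    ell is the cube side length and n the number of grid cells per face,
    so the grid spacing is d = ell / n.
    """
    d = ell / n
    a, b = d * u, d * v
    offset = ell / 2 + h * d      # distance from the center along the face normal
    transforms = {
        "+Z": (a, b, offset),
        "-Z": (a, b, -offset),
        "+Y": (a, offset, b),
        "-Y": (a, -offset, b),
        "+X": (offset, a, b),
        "-X": (-offset, a, b),
    }
    return transforms[face]

print(hat_to_cartesian("+Z", 1, 2, 0, ell=4.0, n=4))
print(hat_to_cartesian("+X", 1, 2, 1, ell=4.0, n=4))
```

Keeping all six cases in one lookup makes it easy to confirm that opposite faces differ only in the sign of the normal coordinate.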

4.5 Navigating the Box

Hat counting makes the ICHTB traversable. Start at i₀ (the origin in hat space: no face, no position). To reach any point:

- Choose a face k — enter one of the six zones

- Choose (u,v) — pick a position on that face's grid

- Choose h — descend into the interior to depth h

- Apply T_k — convert to Cartesian if needed for field evaluation

Movement between adjacent hats:

- Lateral move: (k, u, v, h) → (k, u±1, v, h) or (k, u, v±1, h) — stays on same face, same depth

- Depth move: (k, u, v, h) → (k, u, v, h±1) — moves toward or away from i₀

- Face crossing: Moving off the edge of one face into an adjacent face — requires a coordinate transformation between face frames

Face crossings are topologically non-trivial — the adjacent face shares an edge but has a different normal direction. The crossing condition:

Edge from +Y to +X (the shared edge lies at u = +n/2 on the +Y face and at u′ = +n/2 on the +X face):

$$\vec{T}_{Y+}(n/2, v, 0) = \vec{T}_{X+}(u', v', h')$$

Matching the Cartesian coordinates component by component gives h′ = 0, u′ = n/2, and v′ = v, which is the mapping between the two face frames along this edge.
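The crossing condition can be confirmed by evaluating the two transforms from Section 4.4 on the shared edge. In the sketch below, `t_y_plus`, `t_x_plus`, and `cross_y_to_x` are hypothetical helper names, and the crossing is evaluated at surface level (h = 0) only.

```python
def t_y_plus(u, v, h, ell, n):
    """Face transform for +Y: (d*u, ell/2 + h*d, d*v)."""
    d = ell / n
    return (d * u, ell / 2 + h * d, d * v)

def t_x_plus(u, v, h, ell, n):
    """Face transform for +X: (ell/2 + h*d, d*u, d*v)."""
    d = ell / n
    return (ell / 2 + h * d, d * u, d * v)

def cross_y_to_x(v, n):
    """Map the +Y edge address (n/2, v, 0) to its +X address (u', v', h')."""
    return (n / 2, v, 0)

ell, n = 4.0, 4
for v in range(-2, 3):
    on_y = t_y_plus(n / 2, v, 0, ell, n)
    on_x = t_x_plus(*cross_y_to_x(v, n), ell, n)
    print(v, on_y, on_x)
```

Both addresses land on the same Cartesian point for every v along the edge, so in this pairing the vertical index carries across unchanged while the horizontal index is pinned to the edge value.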

4.6 Hat Depth and Field Value

The hat depth h at position (u, v) on face k is not just a navigation parameter — it encodes a field value. Deeper hats correspond to higher local tension |Φ|.

The correspondence:

$$h = \mathcal{H}_k(u,v) \quad \longleftrightarrow \quad |\Phi(\vec{T}_k(u,v,h))| = \alpha_k \cdot f_k(u,v) + \beta_k$$

This means you can read off the field strength at any surface point simply by counting hats. Conversely, if you evaluate the Master Equation at a point and get Φ, you can convert it back to a hat height and know where in the discrete grid that field value lives.

This duality — between continuous field values and discrete hat heights — is what makes the hat system more than just a coordinate system. It is a measurement protocol: hat counting is how you quantify the ICHTB without losing the discrete structure that makes navigation meaningful.

4.7 Total Hat Count: The Volume Invariant

The total hat count across all faces is:

$$\mathcal{H}_{\text{total}} = \sum_{k} \sum_{u,v} \mathcal{H}_k(u,v)$$

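Up to the cell-area factor d² and the rounding introduced by the floor function, this sum is a discrete approximation to the surface integral of the field magnitude over the six faces: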

$$\mathcal{H}_{\text{total}} \approx \int_{\partial\text{ICHTB}} |\Phi| \, dA$$

When the field reaches a stable shell configuration (see Chapter 5), 𝓗_total becomes approximately constant — the field stops growing globally even as it shifts locally. Stable shell ↔ constant total hat count.

This gives a practical closure criterion: run the Master Equation forward in time, compute 𝓗_total at each step. When 𝓗_total stops changing, the recursion has locked.
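The closure criterion amounts to summing the hat grids and watching the total over time. The sketch below assumes hat counts are stored as a dictionary of 2D integer grids keyed by face; the window length, the tolerance, and the names `total_hat_count` and `has_locked` are illustrative choices rather than values fixed by the framework.

```python
def total_hat_count(hats):
    """Sum hat counts over every face and every (u, v) cell.

    hats maps a face key to a 2D list of integer hat counts.
    """
    return sum(sum(sum(row) for row in grid) for grid in hats.values())

def has_locked(history, window=3, tol=0):
    """True when the last `window` totals differ by at most `tol`."""
    if len(history) < window:
        return False
    recent = history[-window:]
    return max(recent) - min(recent) <= tol

hats = {"+Z": [[1, 2], [3, 4]], "-Z": [[1, 1], [1, 1]]}
print(total_hat_count(hats))
print(has_locked([10, 12, 14, 14, 14]))
print(has_locked([10, 12, 14]))
```

In a simulation loop, the total would be appended to `history` after each time step and the run stopped once `has_locked` returns true.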

4.8 The Hat System as a Collapse Lattice

The hat system is a recursive lattice — not a fixed grid imposed from outside, but a grid that the field builds for itself.

The hat heights 𝓗_k(u,v) are computed from f_k(u,v), which is the curvent-aligned activation. As the field evolves (and the curvent C updates via its own equation), the activation patterns shift, which shifts the hat heights, which changes the effective lattice.

The lattice adapts to the field. The field adapts to the lattice. This is the discrete version of the same recursion that the continuous Master Equation describes.

Key insight: The hat counting system is the ICHTB seen from the outside — the field as it appears to an external observer who can only count discrete features. The Master Equation is the ICHTB seen from the inside — the continuous self-referential dynamics. Both descriptions are the same system.

Chapter 5: Closure

Closure is the event that the entire ICHTB structure is built toward. It is the moment the recursion stops cycling and locks into a stable configuration — the moment a shell emerges.

This chapter defines closure precisely, gives its mathematical conditions, and describes the three phases a recursive system passes through on the way to it.

5.1 What Is Closure?

Informally: the field stops searching. The zones that were in tension with each other — Expansion vs. Compression, Forward vs. Memory — find a configuration where their opposing forces balance, and the field settles into a stable spatial structure that persists without further change.

This stable structure is the shell:

$$\rho_q = -\varepsilon_0\nabla^2\Phi$$

The shell is not a boundary — it is a region of concentrated field curvature. The Laplacian ∇²Φ is large and negative there (Φ peaks), making ρ_q positive — a localized "charge density" in the sense of a Poisson equation. The shell is where the field's recursive tension has crystallized into a stable form.
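The sign argument can be checked numerically. The sketch below is an illustration under stated assumptions: it works in one dimension, uses a Gaussian peak as a stand-in for Φ, sets ε₀ = 1, and approximates the Laplacian with a central difference. The function names are hypothetical.

```python
import math
from typing import List


def laplacian_1d(phi: List[float], dx: float) -> List[float]:
    """Second-order central difference of phi on interior points.
    End points are left at 0.0 because they have no two-sided stencil."""
    lap = [0.0] * len(phi)
    for i in range(1, len(phi) - 1):
        lap[i] = (phi[i - 1] - 2.0 * phi[i] + phi[i + 1]) / dx**2
    return lap


def shell_density(phi: List[float], dx: float, eps0: float = 1.0) -> List[float]:
    """rho_q = -eps0 * laplacian(phi), evaluated on the grid."""
    return [-eps0 * value for value in laplacian_1d(phi, dx)]


dx = 0.1
xs = [i * dx for i in range(-30, 31)]
phi = [math.exp(-x * x) for x in xs]  # assumed test profile: a single peak at x = 0
rho = shell_density(phi, dx)
center = len(xs) // 2  # index of x = 0

# At the peak the Laplacian is negative, so rho_q comes out positive.
print(rho[center] > 0)
```

For this profile the exact value at the peak is ρ_q = 2ε₀, and the finite-difference estimate is close to it for the chosen grid spacing.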

5.2 The Closure Condition

Closure requires two simultaneous conditions:

Condition A: Gradient Product Lock

$$S_\Phi = C_\Phi \circ E_\Phi = -|\nabla\Phi|^2$$

where C_Φ is the compression operator acting on the field and E_Φ is the expansion operator. Their composition must equal the negative of the squared gradient magnitude. This is the algebraic statement that expansion and compression exactly cancel along the gradient direction — the field is neither growing nor shrinking in its own gradient direction.

Condition B: Curvent Consistency

$$\hat{\Omega} \text{ consistent across all triangle crossings}$$

The curvent orientation vector Ω̂ (the unit vector along C) must not flip sign or rotate discontinuously as you cross from one zone to the next. Every triangle edge — every intersection between adjacent Δᵢ planes — must have a consistent curvent direction.

Full Closure:

$$\exists\, t^* \text{ such that both A and B hold simultaneously}$$

$$\implies Q_r \to \text{Shell} \equiv \rho_q = -\varepsilon_0\nabla^2\Phi$$

When both conditions are satisfied at some time t*, the Quadrant Flow Q_r terminates in a shell rather than cycling again.
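Condition B lends itself to a simple numerical test. The sketch below assumes the curvent has been sampled on both sides of each zone crossing and treats "consistent" as "the two unit vectors have a positive dot product". Both the data layout and the threshold `min_cos` are assumptions made for illustration, not definitions from the text.

```python
import math
from typing import Sequence, Tuple

Vector = Tuple[float, float, float]


def unit(v: Vector) -> Vector:
    """Normalize v to obtain the orientation vector Omega-hat."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("curvent vanishes; orientation is undefined")
    return tuple(c / norm for c in v)


def curvent_consistent(crossings: Sequence[Tuple[Vector, Vector]],
                       min_cos: float = 0.0) -> bool:
    """Return True if, at every crossing, the curvent orientations on the two
    sides agree to within the angle whose cosine is `min_cos`."""
    for c_a, c_b in crossings:
        a, b = unit(c_a), unit(c_b)
        if sum(x * y for x, y in zip(a, b)) <= min_cos:
            return False
    return True


# Made-up samples: (curvent on one side, curvent on the other side) per crossing.
smooth = [((1.0, 0.0, 0.0), (0.9, 0.1, 0.0)), ((0.0, 1.0, 0.0), (0.0, 1.0, 0.2))]
flipped = [((1.0, 0.0, 0.0), (-1.0, 0.0, 0.0))]

print(curvent_consistent(smooth), curvent_consistent(flipped))  # True False
```

Raising `min_cos` toward 1 tightens the test from "no sign flip" toward "no rotation at all" across a crossing.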

5.3 The Phase Lock Scalar

Define the phase lock scalar:

$$\Phi_{\text{lock}} = \frac{1}{n}\sum_{i=1}^{n} C_i$$

where C_i ∈ {0, 1} is the collapse state flag for each recursive unit (1 = collapsed, 0 = still cycling).

| Φ_lock | System State |
|---|---|
| 0 | No units collapsed — pure recursion |
| 0 < Φ_lock < 1 | Partial shell — some units locked, some still cycling |
| 1 | Full closure — all units collapsed |

Full closure (Φ_lock = 1) corresponds to the shell condition. The transition from partial to full closure is not guaranteed — it requires the curvent consistency condition to hold globally.
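As a minimal sketch of the definition, the phase lock scalar is the mean of the collapse flags, and the three table rows become a three-way classification. The function names are hypothetical.

```python
from typing import Sequence


def phase_lock(flags: Sequence[int]) -> float:
    """Phi_lock = (1/n) * sum of collapse flags C_i, each 0 or 1."""
    if not flags:
        raise ValueError("need at least one recursive unit")
    if any(f not in (0, 1) for f in flags):
        raise ValueError("collapse flags must be 0 or 1")
    return sum(flags) / len(flags)


def system_state(lock: float) -> str:
    """Map Phi_lock to the system state named in the table."""
    if lock == 0.0:
        return "pure recursion"
    if lock == 1.0:
        return "full closure"
    return "partial shell"


flags = [1, 0, 1, 1]  # made-up example: three of four units collapsed
lock = phase_lock(flags)
print(lock, system_state(lock))  # 0.75 partial shell
```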

5.4 The Three Phases of Recursion

On the way to closure, if it reaches closure at all, the system passes through three phases:

Phase 1: Low Recursion — Isolated Zones

In the early cycles, each Δᵢ domain operates in relative isolation. The field within each zone is governed by its zone PDE without significant cross-zone coupling.

Characteristics:

- Curvent C is small — no strong alignment has developed

- 𝓜ᵢⱼ ≈ δᵢⱼ — the metric has not yet differentiated

- Total hat count 𝓗_total is increasing monotonically

- The phase lock scalar Φ_lock ≈ 0

What this looks like: The field drifts. Each zone does its thing independently. The overall structure is formless.

Phase 2: Mid Recursion — Cross-Zone Coupling

As the curvent builds alignment and the metric tensor develops anisotropy, zones begin interacting. Δ₁ and Δ₂ couple (gradient seeds curl). Δ₃ and Δ₄ balance (expansion and compression begin negotiating). Δ₅ starts receiving material from Δ₃.

Characteristics:

- C is developing persistent loops in the Memory zone

- 𝓜ᵢⱼ is anisotropic — certain directions are preferentially "remembered"

- 𝓗_total is still growing but decelerating

- Phase lock scalar Φ_lock rising: 0 < Φ_lock < 0.5

- Shell precursors appear (local regions where ∇²Φ is consistently negative)

What this looks like: The field organizes. Directional structure appears. Some regions stabilize while others still cycle.

Phase 3: High Recursion — Full Interlock

The zones fully interlock. The metric tensor 𝓜ᵢⱼ has become strongly aligned with the shell geometry. The Apex test (Δ₅) succeeds: ∂Φ/∂t ≈ 0 in the shell region. The Core (Δ₆) receives the locked state and stops cycling.

Characteristics:

- C = ∇Φ everywhere in the shell region — perfect curvent alignment

- 𝓜ᵢⱼ is maximally anisotropic, with its principal axes aligned with the shell surface

- 𝓗_total is approximately constant — total hat count stabilizes

- Phase lock scalar Φ_lock → 1

- ρ_q = −ε₀∇²Φ is non-zero and spatially coherent

What this looks like: The shell snaps into place. The field stops fluctuating in the locked region. The remaining cycling is confined to the space outside the shell.

5.5 The System Coherence Invariant

Track the quantity:

$$\Gamma := \sum_{i=1}^{n}\left(T_i^2 + \left(\frac{dT_i}{dt}\right)^2\right)$$

This is the sum over all recursive units of (tension squared + rate-of-change-of-tension squared). It is the total "activity" of the system.

If Γ is constant: The system is in a stable recursive orbit — it is cycling but not building toward closure. Not all constant-Γ systems will close.

If Γ is decreasing: The system is dissipating. Closure may occur before Γ reaches zero.

If Γ is increasing: The system is in recursive dissonance — the zones are amplifying each other without converging. This leads to divergence, not closure.

The sign of dΓ/dt is the leading indicator of whether closure is possible:

$$\frac{d\Gamma}{dt} < 0 \implies \text{converging} \implies \text{closure possible}$$

$$\frac{d\Gamma}{dt} > 0 \implies \text{diverging} \implies \text{no closure}$$
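A short sketch of how Γ and the sign of dΓ/dt might be tracked between two snapshots. The forward-difference estimate of dΓ/dt, the tolerance `tol` that decides when Γ counts as constant, and the function names are assumptions made for illustration.

```python
import math
from typing import Sequence


def coherence(tensions: Sequence[float], rates: Sequence[float]) -> float:
    """Gamma = sum over units of T_i^2 + (dT_i/dt)^2."""
    if len(tensions) != len(rates):
        raise ValueError("need one rate per tension")
    return sum(t * t + r * r for t, r in zip(tensions, rates))


def classify_trend(gamma_prev: float, gamma_next: float, dt: float,
                   tol: float = 1e-9) -> str:
    """Classify the system from a forward-difference estimate of dGamma/dt."""
    slope = (gamma_next - gamma_prev) / dt
    if slope < -tol:
        return "converging"
    if slope > tol:
        return "diverging"
    return "stable orbit"


# Made-up snapshots of two units, one time step apart.
g0 = coherence([1.0, 0.5], [0.0, 0.5])   # 1.5
g1 = coherence([0.8, 0.4], [0.1, 0.3])   # about 0.9
print(classify_trend(g0, g1, dt=0.1))    # converging

# A single undamped oscillator, T = cos(t), keeps Gamma = 1 for all t.
h0 = coherence([math.cos(0.3)], [-math.sin(0.3)])
h1 = coherence([math.cos(0.4)], [-math.sin(0.4)])
print(classify_trend(h0, h1, dt=0.1))    # stable orbit
```

The oscillator case illustrates the constant-Γ regime described above: the unit keeps cycling and Γ never decays.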

5.6 Shell Properties

Once the shell forms, it has well-defined properties:

Location: The shell surface is the set of points where ∇²Φ achieves its most negative value — the maximum curvature of the field.

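Thickness: Set by the diffusivity D and the decay rate κ. If D is an ordinary diffusivity (length²/time) and κ is a rate (1/time), the ratio D/κ has units of length squared, so the thickness scales as √(D/κ). A large D/κ gives a thick, diffuse shell; a small D/κ gives a thin, sharp one.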

Amplitude: As computed in Chapter 3:

$$\Phi_{\text{shell}} = \pm\sqrt{\frac{\kappa}{\gamma}}$$

Phase: The shell has an associated phase angle θ from the complex representation Φ = A·e^{iθ}. The phase is constant on the shell surface — it is a coherent phase-locked structure.

Stability: The shell is a stable attractor of the Master Equation. Small perturbations to the shell decay back to the shell configuration. This can be verified by linearizing the Master Equation around the shell solution and checking that all perturbation eigenvalues have negative real parts.
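The amplitude quoted above can be recovered from the Master Equation under one simplifying assumption that this section does not state explicitly: a spatially uniform, time-independent field, so that ∂Φ/∂t and every gradient term vanish. A short sketch of that step, which may differ in detail from the Chapter 3 derivation:

$$0 = \gamma\Phi^3 - \kappa\Phi = \Phi\left(\gamma\Phi^2 - \kappa\right)$$

$$\implies \Phi = 0 \quad \text{or} \quad \Phi = \pm\sqrt{\frac{\kappa}{\gamma}}$$

The nonzero roots are real only when κ/γ > 0. The root Φ = 0 is the trivial fixed point.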

5.7 Failure Modes: When Closure Does Not Occur

Not every initial condition leads to closure. Three failure modes:

Mode 1 — No gradient at Δ₁: If the Forward Tension zone has ∇Φ = 0 everywhere (flat initial condition), no recursion initiates. The system sits at the trivial fixed point Φ = 0 forever.

Resolution: Provide a small perturbation to the initial field — even noise is sufficient to seed a non-zero gradient.

Mode 2 — Memory without compression (Δ₂ active, Δ₄ inactive): The curl loops build but never converge. The field develops rotating structure that grows without bound.

Resolution: Ensure the Compression zone Δ₄ is coupled to the Memory zone Δ₂ (the parameter λ in the Curvent equation must be non-zero).

Mode 3 — Curvent inconsistency: The curvent C develops a discontinuity at a zone crossing — it flips direction as you move from one Δᵢ to an adjacent Δⱼ. Closure Condition B fails. The system cycles indefinitely without locking.

Resolution: Increase the curvent relaxation rate η (the curvent aligns faster, reducing the chance of developing a stable inconsistency), or reduce the grid spacing d (finer hat grid → smoother curvent field → fewer crossing discontinuities).

5.8 After Closure

The shell is not the end. Once closure occurs, the ICHTB enters a post-closure regime:

The shell is now a recursive attractor in phase space. New perturbations introduced to the field will either:

- Decay into the existing shell (perturbation too small to break the lock)

- Deform the shell temporarily before it restores (perturbation mid-sized)

- Break the lock and restart the recursion at a higher energy level (perturbation large enough to exceed the stability basin)

The third case is the ICHTB's version of a phase transition. A sufficiently large external input can break the current shell and trigger a new recursion cycle, which may or may not close again at a different shell configuration.

This gives the ICHTB a discrete hierarchy of possible stable states — each shell configuration corresponds to a different set of 𝓜ᵢⱼ eigenvalues, a different total hat count, a different shell radius. The system does not have a unique stable state; it has a discrete spectrum of stable states.

The spectrum is determined by the eigenvalue condition at the Apex:

$$\mu\nabla^2\Phi_n = \nu\Phi_n \implies \Phi_n = \text{nth eigenfunction of } \nabla^2 \text{ in the box}$$

The stable shells correspond to the eigenfunctions of the Laplacian inside the ICHTB — a familiar result that connects the recursive collapse structure back to classical mathematical physics.
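To make the discrete spectrum concrete, the sketch below lists the lowest Laplacian eigenvalues of a cube. It rests on assumptions the text does not fix: the box is a cube of edge `side`, and Φ vanishes on the walls (Dirichlet conditions). Under those assumptions the eigenfunctions are products of sines and the eigenvalues are −(π/side)²(l² + m² + n²). The function name is hypothetical.

```python
import math
from collections import Counter
from itertools import product
from typing import List, Tuple


def box_spectrum(side: float = 1.0, max_mode: int = 3) -> List[Tuple[float, int, int]]:
    """Return (eigenvalue, l^2 + m^2 + n^2, degeneracy) for the lowest levels.

    Assumes Dirichlet walls on a cube of edge `side`, where the eigenfunctions
    are sin(l*pi*x/side) * sin(m*pi*y/side) * sin(n*pi*z/side) with l, m, n >= 1
    and the eigenvalue of the Laplacian is -(pi/side)^2 * (l^2 + m^2 + n^2).
    """
    modes = range(1, max_mode + 1)
    counts = Counter(l * l + m * m + n * n for l, m, n in product(modes, repeat=3))
    # Levels at or above this sum could involve a mode index > max_mode,
    # so their degeneracy would be undercounted; drop them.
    first_incomplete = (max_mode + 1) ** 2 + 2
    scale = (math.pi / side) ** 2
    return [(-scale * s, s, deg) for s, deg in sorted(counts.items()) if s < first_incomplete]


for eigenvalue, index_sum, degeneracy in box_spectrum():
    print(f"sum={index_sum:2d}  degeneracy={degeneracy}  eigenvalue={eigenvalue:.3f}")
```

Levels with degeneracy greater than 1 arise from permuting the mode indices (l, m, n). Different boundary conditions would shift the values but leave the spectrum discrete.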

Chapter 6: Connection to the Collapse Tension Substrate

This chapter is a placeholder. Reconciling ICHTB (git 2.0) with the Collapse Tension Substrate framework (git 1.0) is a dedicated step that follows completion of both books independently.

6.1 Why This Chapter Is Last

The ICHTB (this book) and the CTS (book 1.0) describe overlapping territory from different directions. Connecting them properly requires both frameworks to be at their final form — otherwise any bridge built now would need to be rebuilt when either side changes.

The chapter exists as a placeholder to:

- Signal clearly where the bridge will go

- State what we already know the connection involves

- Define the open questions that the reconciliation will need to resolve

6.2 What We Already Know

Both frameworks involve:

- A scalar potential field Φ with recursive self-reference

- A metric tensor built from field gradients (not imposed externally)

- Dimensionality emerging from field dynamics rather than being pre-given

- A concept of "shell" as a stable recursive attractor

- A hierarchy of stable states indexed by eigenvalues

The ICHTB gives these concepts a geometric home — the six-zone box with explicit PDEs on each face. The CTS gives them an energetic home — a functional that the field minimizes as it collapses. Both should be derivable from the other if the frameworks are truly consistent.

6.3 The Key Question

The primary question for reconciliation:

Is the ICHTB the configuration space of the CTS, or is the CTS the energy functional of the ICHTB?

If the former: the six zones of the ICHTB enumerate the possible directions in which the CTS energy functional changes, and the collapse sequence Q_r is the gradient flow of that functional.

If the latter: the CTS provides the reason why the ICHTB has exactly six zones (and not five or seven) — it is because the CTS energy functional has exactly six independent deformation modes in 3D.

Either answer is mathematically profound. The reconciliation chapter will determine which is correct, or whether both are true simultaneously in some deeper sense.

6.4 Reserved

This section is reserved for the formal derivation once both books are stable.

Bridge content will include:

- Mapping between CTS dimensional scaffolding (0D→1D→2D→3D) and ICHTB zone sequence (Δ₆→Δ₁→Δ₂→Δ₃/₄→Δ₅)

- Identification of ICHTB's master PDE constants (D, Λ, γ, κ) in terms of CTS energy functional parameters

- Proof (or disproof) that the hat-counting discrete lattice is the CTS contact structure

- Statement of the unified theory that contains both as special cases

Return here after both books reach their final forms.